HOME

Installation

This chapter guides you through the entire setup process required to use artemis_crib effectively. By following the steps outlined in the subchapters, you will configure all the necessary tools, environments, and directories to ensure a seamless workflow for analysis tasks.

Below is an overview of the setup process, along with links to detailed instructions for each step.

-

Ensure all required compilers, libraries, and dependencies are installed for compatibility with ROOT and artemis.

-

Set up a Python environment using tools like

uvto support pyROOT and TSrim. -

Install ROOT from source and configure it for use with artemis.

-

Clone, build, and configure artemis.

-

Set up tools for energy loss calculations, essential for analyzing experimental data.

-

Configure NFS mounts to access remote file servers or external storage.

-

Create and configure the

art_analysisdirectory, which serves as the workspace for all analysis tasks.

Requirements

This page outlines the packages required to run artemis_crib, including ROOT. Since most of the code is based on ROOT, please refer to the official ROOT dependencies for more information.

Compilers

- C++17 support is required

- gcc 8 or later is supported

- (currently Clang is not supported)

Required packages

The following is an example command to install the packages typically used on an Ubuntu-based distribution:

sudo apt install binutils cmake dpkg-dev g++ gcc libssl-dev git libx11-dev \

libxext-dev libxft-dev libxpm-dev libtbb-dev libvdt-dev libgif-dev libyaml-cpp-dev \

htop tmux vim emacs wget curl build-essential

- The first set of packages (e.g.,

binutils,cmake,g++) includes essential tools and libraries required to compile and run the code. libyaml-cpp-dev: A YAML parser library used for configuration and data input.htop,tmux,vim,emacs,wget,curl: Optional but commonly used tools for system monitoring, session management, and file downloads, making the environment more convenient for server-side analysis.build-essential: A meta-package that ensures essential compilation tools are installed.

To use pyROOT or the Python interface of TSrim, Python must be installed.

Although Python can be installed using a package manager like apt (sudo apt install python3 python3-pip python3-venv), it is recommended to use tools such as pyenv to create a virtual environment.

Popular tools for managing Python environments include:

If you plan to use pyROOT, ensure that the Python environment is fully set up before proceeding to the next section. Instructions for setting up the Python environment are available in the Python Setting section.

CRIB analysis machine specifications

The functionality of artemis_crib has been confirmed in the following environment:

- Ubuntu 22.04.4 (LTS)

- gcc 11.4.0

- cmake 3.22.1

- ROOT 6.30/04

- yaml-cpp 0.7

- artemis commit ID a976bb9

Python Environments (option)

By installing Python, you can use pyROOT and TSrim directly from Python. However, Python is not required to use artemis_crib, so you may skip this section if you do not plan to use Python.

Why Manage Python Environments?

Managing Python environments and dependencies is crucial to avoid compatibility issues. Some configurations may work in specific environments but fail in others due to mismatched dependencies. To address this, we recommend using tools that handle dependencies efficiently and isolate environments.

Popular Tools for Python Environment Management

| Tool | Description |

|---|---|

| pyenv | Manages multiple Python versions and switches between them on the same machine. |

| poetry | A dependency manager and build system for Python projects. |

| pipenv | Combines pip and virtualenv for managing dependencies and virtual environments. |

| mise1 | Runtime manager (e.g., Python, Node.js, Java, Go). Ideal for multi-tool projects. |

| uv | A fast Python project manager (10-100x faster than pip), unifying tools like pip, poetry, and pyenv. |

The author (okawak) uses a combination of mise and uv to manage Python environments.

If your projects involve multiple tools, such as Python and Node.js, mise is highly effective for unified management.

However, if you work exclusively with Python, uv is a simpler and more focused option.

Using uv for a Global Python Environment

This section explains how to use uv to set up a global Python environment, required for tools like pyROOT. uv typically creates project-specific virtual environments, but here we focus on configuring a global virtual environment. For other methods, refer to the respective tool's documentation.

Step 1: Install uv

Install uv using the following command:

curl -LsSf https://astral.sh/uv/install.sh | sh

Follow the instructions to complete the installation and configure your environment (e.g., adding uv to PATH).

Verify the installation:

uv --version

Step 2: Install Python using uv

Install a specific Python version:

uv python install 3.12

-

Replace

3.12with the desired Python version. -

To view available versions:

uv python list

Currently, Python installed via uv cannot be globally accessed via the python command.

This feature is expected in future releases.

For now, use uv venv to create a global virtual environment.

Step 3: Create a Global Virtual Environment

To create a global virtual environment:

cd $HOME

uv venv

This creates a .venv directory in $HOME.

Step 4: Persist the Environment Activation

Edit your shell configuration file to activate the virtual environment at startup:

vi $HOME/.zshrc

Add:

# Activate the global uv virtual environment

if [[ -d "$HOME/.venv" ]]; then

source "$HOME/.venv/bin/activate"

fi

Apply the changes:

source $HOME/.zshrc

Verify the Python executable:

which python

Ensure the output is .venv/bin/python.

Step 5: Add Common Packages

Install commonly used Python packages into the virtual environment:

uv pip install numpy pandas

Additional information

- For more detail, refer to the uv documentation.

ROOT

The artemis and artemis_crib tools are built based on the ROOT library. Before installing these tools, you must install ROOT on your system.

Why Build ROOT from Source?

Since artemis and artemis_crib may depend on a specific version of ROOT, it is recommended to build ROOT from source rather than using a package manager. This approach ensures compatibility and access to all required features.

Steps to Build ROOT from Source

-

Navigate to the directory where you want to install ROOT:

cd /path/to/installation -

Clone the ROOT repository:

git clone https://github.com/root-project/root.git root_src -

Checkout the desired version (replace

<branch name>and<tag name>with the specific version):cd root_src git switch -c <branch name> <tag name> cd .. -

Create build and installation directories, and configure the build:

mkdir <builddir> <installdir> cd <builddir> cmake -DCMAKE_INSTALL_PREFIX=<installdir> -Dmathmore=ON ../root_src- Set

mathmoretoONbecause artemis relies on this library for advanced mathematical features.

- Set

-

Compile and install ROOT:

cmake --build . --target install -- -j4- Adjust the

-joption based on the number of CPU cores available (e.g.,-j8for 8 cores) to optimize the build process.

- Adjust the

-

Set up the ROOT environment:

source <installdir>/bin/thisroot.sh- Replace

<installdir>with the actual installation directory. - Running this command loads the necessary environment variables for ROOT.

- Replace

Persisting the Environment Setup

To avoid running the source command manually each time, add it to your shell configuration file (e.g., .zshrc or .bashrc):

echo 'source <installdir>/bin/thisroot.sh' >> ~/.zshrc

source ~/.zshrc

This ensures that the ROOT environment is automatically loaded whenever a new shell session starts.

Important Note for pyROOT Users

If you plan to use pyROOT, make sure your Python environment is set up before proceeding with the ROOT installation. Refer to the Python Setting section for detailed instructions on setting up Python and managing virtual environments.

Additional information

- For more details and troubleshooting, consult the official ROOT installation guide.

- Ensure your system meets all prerequisites listed in the ROOT documentation, including necessary libraries and tools.

- Manage ROOT versions appropriately to maintain compatibility with your analysis environment and dependent tools.

Artemis

This section provides detailed instructions for installing artemis, which serves as the foundation for artemis_crib. While artemis_crib is specifically customized for experiments performed at CRIB, artemis is a general-purpose analysis framework.

Steps to Install artemis

-

Navigate to the directory where you want to install artemis:

cd /path/to/installation -

Clone the artemis repository:

git clone https://github.com/artemis-dev/artemis.git cd artemis -

Switch to the develop branch: The

developbranch is compatible with ROOT version 6 and is recommended for installation.git switch develop -

Create a build directory and configure the build: You can customize the build with the following options:

mkdir build cd build cmake -DCMAKE_INSTALL_PREFIX=<installdir> ..CMake Configuration Options

Option Default Value Description -DCMAKE_INSTALL_PREFIX./installSpecifies the installation directory. Replace <installdir>with your desired directory.-DBUILD_GETOFFEnables or disables building the GET decoder. If ON, specify the GET decoder path using-DWITH_GET_DECODER.-DWITH_GET_DECODERNot Set Specifies the path to the GET decoder. Required when -DBUILD_GET=ON.-DCMAKE_PREFIX_PATHNot Set Specifies paths to yaml-cpporopenMPI. If not found automatically, you must set it manually. Note thatyaml-cppis required, but MPI support will be disabled ifopenMPIis missing.-DBUILD_WITH_REDISOFFEnables or disables Redis integration. -DBUILD_WITH_ZMQOFFEnables or disables ZeroMQ integration. Example: Customized Configuration Command

cmake -DCMAKE_INSTALL_PREFIX=/path/to/installation -DBUILD_GET=ON -DWITH_GET_DECODER=/path/to/decoder -DBUILD_WITH_REDIS=ON -DBUILD_WITH_ZMQ=ON .. -

Compile and install artemis:

cmake --build . --target install -- -j4- Adjust the

-joption based on your system's CPU cores (e.g.,-j8for 8 cores).

- Adjust the

-

Set up the artemis environment: After installation, a script named

thisartemis.shwill be generated in the installation directory. Run the following command to set up the environment variables:source <installdir>/bin/thisartemis.sh

Persisting the Environment Setup

To avoid running the source command manually every time, add it to your shell configuration file (e.g., .zshrc or .bashrc):

echo 'source <installdir>/bin/thisartemis.sh' >> ~/.zshrc

source ~/.zshrc

This ensures that the artemis environment is automatically loaded when a new shell session starts.

Further Information

- For additional details about artemis, visit the artemis GitHub repository.

Energy Loss Calculator

TSrim is a ROOT-based library, derived from TF1, designed to calculate the range or energy loss of ions in materials using SRIM range data.

Unlike directly interpolating SRIM's output files, TSrim fits a polynomial function to the log(Energy) vs. log(Range) data for specific ion-target pairs, ensuring high performance and accuracy.

At CRIB, tools like enewz and SRIMlib have also been developed for energy loss calculations. Among them, TSrim, developed by S. Hayakawa, stands out for its versatility and is supported in artemis_crib.

Prerequisites

- C++17 or later: Required for compilation.

- ROOT installed: Ensure ROOT is installed and accessible in your environment.

- Python 3.9 or later (optional): For Python integration.

Steps to Build with CMake

1. Clone the Repository

Navigate to the desired installation directory and clone the repository:

cd /path/to/installation

git clone https://github.com/CRIB-project/TSrim.git

cd TSrim

To use this library with Python, clone the python_develop branch:

git switch python_develop

Configure the Build

Create a build directory and configure the build with CMake.

You can specify a custom installation directory using -DCMAKE_INSTALL_PREFIX:

mkdir build

cd build

cmake -DCMAKE_INSTALL_PREFIX=../install ..

If no directory is specified, the default installation path is /usr/local.

Compile and Install

Build and install the library:

cmake --build . --target install -- -j4

- Adjust the

-joption based on the number of CPU cores available (e.g.,-j8for 8 cores) to speed up the build process.

Uninstallation

To remove TSrim, run one of the following commands from the build directory:

make uninstall

or

cmake --build . --target uninstall

Usage in Other CMake Projects

TSrim supports CMake's find_package functionality. To link TSrim to your project, add the following to your CMakeLists.txt:

find_package(TSrim REQUIRED)

target_link_libraries(${TARGET_NAME} TSrim::Srim)

Additional Resources

Mount Setting (option)

In many experimental setups, tasks are often distributed across multiple servers, such as:

- DAQ Server: Handles the DAQ process.

- File Server: Stores experimental data.

- Analysis Server: Performs data analysis.

To simplify workflows, NFS (Network File System) can be used to allow the analysis server to access data directly from the file server without duplicating files. Additionally, external storage devices can be mounted for offline analysis to store data or generated ROOT files.

Configuring the File Server for NFS

Step 1: Install NFS Server Utilities

sudo apt update

sudo apt install nfs-kernel-server

Step 2: Configure Shared Directories in /etc/exports

-

Edit the

/etc/exportsfile:sudo vi /etc/exports -

Add an entry for the directory to share:

/path/to/shared/data <client_ip>(ro,sync,subtree_check)- Replace

/path/to/shared/datawith the directory you want to share. - Replace

<client_ip>with the IP address or subnet (e.g.,192.168.1.*).

- Replace

-

Common options in

/etc/exportsOption Description rwAllows read and write access. ro(default)Allows read-only access. sync(default)Commits changes to disk before notifying the client. This ensures data integrity but may slightly reduce speed. asyncAllows the server to reply to requests before changes are committed to disk. This improves speed but risks data corruption in case of failure. subtree_check(default)Ensures proper permissions for subdirectories but may reduce performance. no_subtree_checkDisables subtree checks for better performance but reduces strict access control. wdelay(default)Delays disk writes to combine operations for better performance. Improves performance but increases the risk of data loss during failures. no_wdelayDisables delays for immediate write operations, reducing risk of data loss but potentially decreasing performance. hidePrevents overlapping mounts from being visible to clients. Enhances security by hiding overlapping mounts. nohideAllows visibility of overlapping mounts. Useful for nested exports but can lead to confusion. root_squash(default)Maps the root user of the client to a non-privileged user on the server. Improves security by preventing root-level changes. no_root_squashAllows the root user of the client to have root-level access on the server. This is not recommended unless absolutely necessary. all_squashMaps all client users to a single anonymous user on the server. Useful for shared directories with limited permissions. -

Save and exit the editor.

Step 3: Apply Changes and Start NFS Server

sudo exportfs -a

sudo systemctl enable nfs-server

sudo systemctl start nfs-server

Configuring the Analysis Server for Mounting

1. Mounting a Shared Directory via NFS

Step 1: Install NFS Utilities:

sudo apt update

sudo apt install nfs-common

Step 2: Create a Mount Point:

sudo mkdir -p /mnt/data

Step 3: Configure Persistent Mounting:

sudo vi /etc/fstab

Add:

<file_server_ip>:/path/to/shared/data /mnt/data nfs defaults 0 0

Step 4: Apply and Verify:

sudo mount -a

df -h

2. Mounting External Storage (e.g., USB or HDD)

Step 1: Identify the Device:

lsblk

- Look for the device name (e.g.,

/dev/sdb1) in the output.

Step 2: Create a Mount Point:

sudo mkdir -p /mnt/external

Step 3: Configure Persistent Mounting:

sudo vi /etc/fstab

Add:

/dev/sdb1 /mnt/external ext4 defaults 0 0

- Replace

/dev/sdb1with the actual device name. - Replace

ext4with the correct filesystem type (e.g.,ext4,xfs,vfat).

Step 4: Apply and Verify:

sudo mount -a

df -h

Troubleshooting

-

File Server Issues:

- Ensure the NFS service is running on the file server:

sudo systemctl status nfs-server- Verify the export list:

showmount -e -

Analysis Server Issues:

- Check the NFS mount status:

sudo mount -v /mnt/data- Verify network connectivity between the analysis server and file server.

-

External Storage Issues:

- Unmount safety:

sudo umount /mnt/external- Formatting uninitialized Storage:

sudo mkfs.ext4 /dev/sdb1- Use UUIDs for reliable mounting to avoid issues with device naming (e.g.,

/dev/sdb1):

sudo blkid /dev/sdb1Add to

/etc/fstab:UUID=your-uuid-here /mnt/external ext4 defaults 0 0

Example Configuration

File Server (/etc/exports)

/data/shared 192.168.1.101(rw,sync,no_subtree_check)

/data/backup 192.168.1.102(ro,async,hide)

Analysis Server (/etc/fstab)

192.168.1.100:/data/shared /mnt/data nfs defaults 0 0

UUID=abc123-4567-89def /mnt/external ext4 defaults 0 0

Art_analysis

When using artemis, it is customary to create a directory named art_analysis in your $HOME directory to organize and perform all analysis tasks.

This section explains how to set up the art_analysis directory structure and configure the required shell scripts.

Initialize the Directory Structure

Run the following command to create the directory structure and download the necessary shell scripts:

curl -fsSL --proto '=https' --tlsv1.2 https://crib-project.github.io/artemis_crib/scripts/init.sh | sh

This script will:

- Create the

art_analysisdirectory in$HOMEif it does not already exist. - Set up subdirectories and shell scripts in

art_analysis/bin. - Automatically assign the appropriate permissions to all scripts.

If the art_analysis directory already exists, the script will make no changes.

Directory Structure Overview

After running the script, the art_analysis directory will be organized as follows:

art_analysis/

├── bin/

│ ├── art_setting

│ ├── artnew

│ ├── artup

├── .conf/

│ ├── artlogin.sh

bin/: Contains shell scripts used for various analysis tasks..conf/: Reserved for configuration files.

Configuring the PATH and Loading art_setting

To use the scripts in art_analysis/bin globally, add the directory to your PATH environment variable.

-

Edit your shell configuration file (e.g.,

.bashrcor.zshrc) and add the following line:export PATH="$HOME/art_analysis/bin:$PATH" -

Apply the changes:

source ~/.zshrc # or source ~/.bashrc -

Verify the configuration:

which art_settingThe output should point to

~/art_analysis/bin/art_setting.

Automatically Loading art_setting

The art_setting script defines several functions to simplify analysis tasks using artemis.

To make these functions available in every shell session, add the following line to your shell configuration file:

source $HOME/art_analysis/bin/art_setting

Apply the changes:

source ~/.zshrc # or source ~/.bashrc

Overview of Scripts

The following scripts are included in art_analysis/bin:

art_setting: Sets up functions for the analysis environment.artnew: Creates directories and files for new analysis sessions.artup: Updates the shell scripts and settings.artlogin.sh: Configures individual analysis environments and automatically loads environment variables.

Example Shell Configuration (e.g., .zshrc)

Below is an example of a complete .zshrc configuration file.

It includes all the settings required for artemis and related tools, ensuring proper initialization in each shell session.

# Activate the global uv virtual environment

if [[ -d "$HOME/.venv" ]]; then

source "$HOME/.venv/bin/activate"

fi

# artemis configuration

if [[ -d "$HOME/art_analysis" ]]; then

# ROOT

source <root_installdir>/bin/thisroot.sh >/dev/null 2>&1

# TSrim (if needed)

source <tsrim_installdir>/bin/thisTSrim.sh >/dev/null 2>&1

# artemis

source <artemis_installdir>/install/bin/thisartemis.sh >/dev/null 2>&1

export EXP_NAME="exp_name"

export EXP_NAME_OLD="exp_old_name"

# Add art_analysis/bin to PATH

export PATH="$HOME/art_analysis/bin:$PATH"

# Load artemis functions

source "$HOME/art_analysis/bin/art_setting"

fi

Notes

- Replace

<root_installdir>,<tsrim_installdir>, and<artemis_installdir>with the actual paths on your system. - Set appropriate values for

EXP_NAMEandEXP_NAME_OLDbased on your experiment settings. These are explained in the next section: Make New Experiment.

Docker (option)

We might provide a Docker image in the future.

Please wait for a new information!

General Usages

This chapter outlines the essential steps for setting up and managing data analysis using artemis.

Each section focuses on key components of the workflow:

-

Explain how to initialize a new experiment environment with the necessary directory structure and configurations.

-

Set up individual working directories for each user to facilitate collaborative analysis.

-

Understand the basic

artemiscommands for logging in, running event loops, and visualizing data using example code. -

Configure a VNC server to display graphical outputs when connected via SSH, including steps for remote access using SSH port forwarding.

-

Define analysis workflows using steering files in YAML format, covering variable replacement, processor configuration, and file inclusion.

-

Explain how to define and group histograms for quick data visualization.

Make New Experiments

This guide explains how to set up the environment for a new experiment.

At CRIB, we use a shared user for experiments and organize them within the art_analysis directory.

The typical directory structure looks like this1:

~/art_analysis/

├── exp_name1/

│ ├── exp_name1/ # default (shared) user

│ ...

│ └── okawak/ # individual user

├── exp_name2/

│ ├── exp_name2/ # default (shared) user

│ ...

│ └── okawak/ # individual user

├── bin/

├── .conf/

│ ├── exp_name1.sh

│ ├── exp_name2.sh

│ ...

Different organizations may follow their own conventions. At CRIB, this directory structure is assumed.

Steps to Set Up a New Experiment

1. Start Setup with artnew

Run the following command to begin the setup process:

artnew

This command will guide you interactively through the configuration process.

2. Input Experimental Name

When prompted:

Input experimental name:

Enter a unique name for your experiment. This name will be used to create directories and configuration files. Choose something meaningful to identify the experiment.

3. Input Base Repository Path or URL

Next, you will see:

Input base repository path or URL:

Specify the Git repository for artemis_crib or your custom source. By default, the GitHub repository is cloned to create a new analysis environment. If you’ve prepared a different working directory, enter its path.

Note: CRIB servers support SSH cloning. For personal environments without SSH key registration, use HTTPS.

4. Input Raw Data Directory Path

Provide the path where your experiment’s binary data (e.g., .ridf files) is stored:

Input rawdata directory path:

The system creates a symbolic link named ridf in the working directory, pointing to the specified path.

If needed, you can adjust this link manually after setup.

5. Input Output Data Directory Path

Next, specify the directory for storing output data:

Input output data directory path:

A symbolic link named output will point to this directory.

If you prefer to store files directly in the output directory of your working environment,

you can manually modify the configuration after setup.

6. Choose Repository Setup Option

Finally, decide how to manage the Git repository:

Select an option for repository setup:

1. Create a new repository locally.

2. Clone default branch and create a new branch.

3. Use the repository as is. (for developers)

- Option 1: Creates a local repository. Use this if all work will remain local.

- Option 2: Clones the default branch from GitHub and creates a new branch for the experiment.

- Option 3: Uses the main branch as-is. This option is recommended for developers.

Verifying the Configuration

After completing the setup, the configuration file will be saved in art_analysis/.conf/exp_name.sh.

Update your shell configuration to include the experiment name:

vi .zshrc # or .bashrc

Add the following line:

export EXP_NAME="exp_name"

Reload the shell configuration:

source .zshrc

Using artlogin

Run the following command to set up the working directories (next section):

artlogin

If you encounter the following error:

artlogin: Environment for 'exp_name' not found. Create it using 'artnew' command.

Check the following:

- Ensure

art_analysis/.conf/exp_name.shwas created successfully. - Verify that

EXP_NAMEin your shell configuration (.zshrcor.bashrc) is correct and loaded.

Make New Users

This section explains how to create working directories for individual users.

With the overall structure now prepared, you are ready to start using artemis_crib!

Steps

1. Run artlogin

To add a new user (working directory), use the artlogin command.

If no arguments are provided, the directory corresponding to the EXP_NAME specified in .zshrc or .bashrc will be created.

artlogin username

- If the

usernamedirectory already exists, the necessary environment variables will be loaded, and you will be moved to that directory. - If it does not exist, you will be prompted to enter configuration details interactively.

Note: The

artlogin2command is also available. Unlikeartlogin,artlogin2usesEXP_NAME_OLDas the environment variable. This is useful for analyzing data from a past experiment while keeping the current experiment name inEXP_NAME. Replaceartloginwithartlogin2as needed.

2. Interactive Configuration

When creating a new user, you will be prompted as follows.

If you ran the command by mistake, type n to exit.

Create new user? (y/n):

If you type y, the setup continues.

Next, you will be asked to provide your name and email address. This information is used by Git to track changes made by the user:

Input full name:

Input email address:

The repository will then be cloned, and symbolic links (e.g., ridf, output, rootfile) specified during the artnew command setup will be created.

You will automatically move to the new working directory.

3. Build the Source Code

The CRIB-related source code is located in the working directory and must be built before use. Follow the standard CMake build process:

mkdir build

cd build

cmake ..

make -j4 # Adjust the number of cores as needed

make install

cd ..

When running cmake, a thisartemis-crib.sh file will be created in the working directory.

This file is used to load environment variables.

While the artlogin command loads it automatically, for the initial setup, run the following commands manually or rerun artlogin:

artlogin username

or

source thisartemis-crib.sh

Useful Commands

acd

The acd command is an alias defined after running artlogin.

It allows you to quickly navigate to the working directory.

acd='cd ${ARTEMIS_WORKDIR}'

a

The a command launches the artemis interpreter.

It only works in directories containing the artemislogon.C file and is defined in the art_setting shell script.

Example implementation:

a() {

# Check if 'artemislogon.C' exists in the current directory

if [ ! -e "artemislogon.C" ]; then

printf "\033[1ma\033[0m: 'artemislogon.C' not found\n"

return 1

fi

# Determine if the user is connected via SSH and adjust DISPLAY accordingly

if [ -z "${SSH_CONNECTION:-}" ]; then

# Not connected via SSH

artemis -l "$@"

elif [ -f ".vncdisplay" ]; then

# Connected via SSH and .vncdisplay exists

DISPLAY=":$(cat .vncdisplay)" artemis -l "$@"

else

# Connected via SSH without .vncdisplay

artemis -l "$@"

fi

}

Run a Example Code

This section provides a hands-on demonstration of how to use artemis_crib with an example code.

Step 1: Log In to the Working Directory

Log in to the user’s working directory using the artlogin command:

artlogin username

This command loads the necessary environment variables for the user.

Once logged in, you can start the artemis interpreter:

a

Note: If connected via SSH, ensure X11 forwarding is configured or use a VNC server to view the canvas. Refer to the VNC server sections for setup details.

When the artemis interpreter starts, you should see the prompt:

artemis [0]

If errors occur, verify that the source code has been built and that the thisartemis-crib.sh file has been sourced.

Step 2: Load the Example Steering File

artemis uses a YAML-based steering file to define data and analysis settings.

For this example, use the steering file steering/example/example.tmpl.yaml.

Load the file with the add command with the path of the steering file:

artemis [] add steering/example/example.tmpl.yaml NUM=0001 MAX=10

- NUM: Used for file naming.

- MAX: Specifies the maximum value for a random number generator.

These arguments are defined in the steering file. Refer to the Steering files section.

Step 3: Run the Event Loop

Start the event loop using the resume command (or its abbreviation, res):

artemis [] res

Once the loop completes, you’ll see output like:

Info in <art::TTimerProcessor::PostLoop>: real = 0.02, cpu = 0.02 sec, total 10000 events, rate 500000.00 evts/sec

To pause the loop, use the suspend command (abbreviation: sus):

artemis [] sus

Step 4: View Histograms

Listing Histograms

Use the ls command to list available histograms:

artemis [] ls

Example output:

artemis

> 0 art::TTreeProjGroup test2 test (2)

1 art::TTreeProjGroup test test

2 art::TAnalysisInfo analysisInfo

The histograms are organized into directories represented by the art::TTreeProjGroup class.

This class serves as a container for multiple histograms, making it easier to manage related data.

Navigate to a histogram directory using its ID or name:

artemis [] cd 1

or:

artemis [] cd test

Once inside the directory, use the ls command to view its contents:

artemis [] ls

test

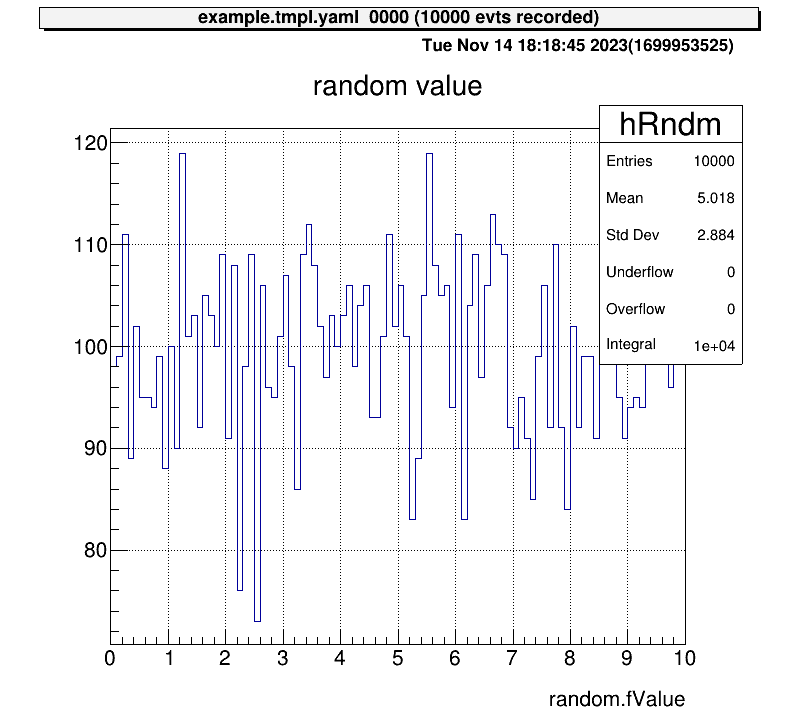

> 0 art::TH1FTreeProj hRndm random value

Here, art::TH1FTreeProj is a customized class derived from TH1F, designed for efficient analysis within artemis.

Drawing Histograms

Draw a histogram using the ht command with its ID or name:

artemis [] ht 0

To return to the root directory, use:

artemis [] cd

To move one directory up, use:

artemis [] cd ..

This moves you up one level in the directory structure.

Step 5: View Tree Data

After the event loop, a ROOT file containing tree objects is created.

List available files using fls:

artemis [] fls

files

0 TFile output/0001/example_0001.tree.root (CREATE)

Navigate into the ROOT file using fcd with the file ID:

artemis [] fcd 0

Listing Branches

List tree branches with branchinfo (or br):

artemis [] br

random art::TSimpleData

View details of a branch’s members and methods:

artemis [] br random

art::TSimpleData

Data Members

Methods

Bool_t CheckTObjectHashConsistency

TSimpleData& operator=

TSimpleData& operator=

See also

art::TSimpleDataBase<double>

To explore inherited classes, use classinfo (or cl):

artemis [] cl art::TSimpleDataBase<double>

art::TSimpleDataBase<double>

Data Members

double fValue

Methods

void SetValue

double GetValue

Bool_t CheckTObjectHashConsistency

TSimpleDataBase<double>& operator=

See also

art::TDataObject base class for data object

Drawing Data from Trees

Unlike standard ROOT files, data in artemis cannot be accessed directly through branch names.

Instead, use member variables or methods of the branch objects.

Example:

artemis [] tree->Draw("random.fValue")

artemis [] tree->Draw("random.GetValue()")

VNC Server (option)

When performing data analysis, direct access to the server is not always practical. Many users connect via SSH, which can complicate graphical displays. While alternatives such as X11 forwarding or saving images exist, VNC is often preferred due to its lightweight design and fast rendering capabilities. This section explains how to set up and use a VNC server for visualization.

Setting Up the VNC Server

To use VNC, a VNC server must be installed on the analysis server.

Popular options include TigerVNC and TightVNC.

For CRIB analysis servers, TightVNC is the chosen implementation.

Installing TightVNC

To install TightVNC on an Ubuntu machine, use the following commands:

sudo apt update

sudo apt install tightvncserver

On CRIB servers, VNC is used solely to render artemis canvases. No desktop environment is installed. For further details, refer to the official TightVNC documentation.

Starting the VNC Server

Start the VNC server with the following command:

vncserver :10

Here, :10 specifies the display number, and the VNC server will run on port 5910 (calculated as 5900 + display number).

export DISPLAY=:10

However, sometimes you may find that even though VNC is running, the plot you’re trying to display does not appear. In this case, you should check the DISPLAY settings on the analysis computer.

Checking Active VNC Servers

If multiple VNC processes are active, using an already occupied display number will cause an error.

Check active VNC processes with the vnclist command, defined as an alias on CRIB servers:

vnclist

Example output:

Xtightvnc :23

Xtightvnc :3

Alias definition:

vnclist: aliased to pgrep -a vnc | awk '{print $2,$3}'

Configuring artemis to Use VNC

To render artemis canvases on the VNC server, the DISPLAY environment variable must be set correctly.

The a command automates this process.

How the a Command Works

The a command is defined as follows:

a() {

if [ ! -e "artemislogon.C" ]; then

printf "\033[1ma\033[0m: 'artemislogon.C' not found\n"

return 1

fi

if [ -z "${SSH_CONNECTION:-}" ]; then

artemis -l "$@"

elif [ -f ".vncdisplay" ]; then

DISPLAY=":$(cat .vncdisplay)" artemis -l "$@"

else

artemis -l "$@"

fi

}

Explanation:

- If

artemislogon.Cis missing in the current directory, the command exits. - If not connected via SSH,

artemis -lruns using the local display. - If

.vncdisplayexists, its content is read to set theDISPLAYvariable before launchingartemis. - Otherwise,

artemis -lruns with default settings.

Configuring .vncdisplay

To direct artemis canvases to the VNC server:

- Create a

.vncdisplayfile in your working directory. - Add the display number (e.g.,

10) as its content:10 - Start

artemisusing theacommand. The canvas should now appear on the VNC server.

Configuring the VNC Client

To view canvases, you must connect to the VNC server using a VNC client. Popular options include RealVNC.

Connecting to the Server

If the client machine is on the same network as the server, connect using the server’s IP address (or hostname) and port number:

<analysis-server-ip>:5910

- The port number is

5900 + display number. - If prompted for a password, use the one set during the VNC server setup or contact the CRIB server administrator.

Using SSH Port Forwarding for Remote Access

When accessing the analysis server from an external network (e.g., from home), direct VNC connections are typically blocked. SSH port forwarding allows secure access in such cases.

flowchart LR;

A("**Local Machine**")-->B("**SSH server**")

B-->C("**Analysis Machine**")

C-->B

B-->A

Multi-Hop SSH Setup

If a gateway server is required for access, configure multi-hop SSH in your local machine’s .ssh/config file.

Example:

Host gateway

HostName <gateway-server-ip>

User <gateway-username>

IdentityFile <path-to-private-key>

ForwardAgent yes

Host analysis

HostName <analysis-server-ip>

User <analysis-username>

IdentityFile <path-to-private-key>

ForwardAgent yes

ProxyCommand ssh -CW %h:%p gateway

ServerAliveInterval 60

ServerAliveCountMax 5

With this configuration, connect to the analysis server using:

ssh analysis

Setting Up Port Forwarding

To forward the analysis server’s VNC port (e.g., 5910) to a local port (e.g., 59010):

- Use the

-Loption in your SSH command:ssh -L 59010:<analysis-server-ip>:5910 analysis - Alternatively, add the

LocalForwardoption to your.ssh/configfile:Host analysis LocalForward 59010 localhost:5910 - After connecting via SSH, start your VNC client and connect to:

localhost:59010

You should now see the artemis canvases rendered on your local machine.

Note: This is one example configuration. Customize the setup as needed for your environment.

Steering Files

Steering files define the configuration for the data analysis flow in YAML format.

This section explains their structure and usage using the example file steering/example/example.tmpl.yaml from the previous section.

Understanding the Steering File

The content of steering/example/example.tmpl.yaml is structured as follows:

Anchor:

- &treeout output/@NUM@/example_@NUM@.tree.root

- &histout output/@NUM@/example_@NUM@.hist.root

Processor:

- name: timer

type: art::TTimerProcessor

- include:

name: rndm.inc.yaml

replace:

MAX: @MAX@

- name: hist

type: art::TTreeProjectionProcessor

parameter:

FileName: hist/example/example.hist.yaml

OutputFilename: *histout

Type: art::TTreeProjection

Replace: |

MAX: @MAX@

- name: treeout

type: art::TOutputTreeProcessor

parameter:

FileName: *treeout

The file is divided into two main sections:

Anchor: Defines reusable variables using the YAML anchor feature (&name value), which can be referenced later as*name.Processor: Specifies the sequence of processing steps for the analysis.

Variables Enclosed in @

Variables such as @NUM@ and @MAX@ are placeholders replaced dynamically when loading the steering file via the artemis command:

artemis [] add steering/example/example.tmpl.yaml NUM=0001 MAX=10

For example, the Anchor section is replaced as follows:

Anchor:

- &treeout output/0001/example_0001.tree.root

- &histout output/0001/example_0001.hist.root

This allows the steering file to adapt dynamically to different analysis configurations.

Data Processing Flow

When artemis runs, the processors defined in the Processor section are executed sequentially.

For example, the first processor in example.tmpl.yaml is:

- name: timer

type: art::TTimerProcessor

Each processor entry requires the following keys:

name: A unique identifier for the process, aiding in debugging and logging.type: Specifies the processor class to use.parameter(optional): Defines additional parameters for the processor.

The general structure of any steering file is as follows:

- name: process1

type: art::THoge1Processor

parameter:

prm1: hoge

- name: process2

type: art::THoge2Processor

parameter:

prm2: hoge

- name: process3

type: art::THoge3Processor

parameter:

prm3: hoge

Processors are executed in the order they appear in the file.

Referencing Other Steering Files

For modular or repetitive configurations, other steering files can be included using the include keyword.

For example:

- include:

name: rndm.inc.yaml

replace:

MAX: @MAX@

Content of rndm.inc.yaml

Processor:

- name: MyTRandomNumberEventStore

type: art::TRandomNumberEventStore

parameter:

Max: @MAX@ # [Float_t] the maximum value

MaxLoop: 10000 # [Int_t] the maximum number of loops

Min: 0 # [Float_t] the minimum value

OutputCollection: random # [TString] output name of random values

OutputTransparency: 0 # [Bool_t] Output is persistent if false (default)

Verbose: 1 # [Int_t] verbose level (default 1: non-quiet)

The flow of variable replacement is as follows:

MAX=10is passed via theartemiscommand.@MAX@inexample.tmpl.yamlis replaced with10.- The

replacedirective inexample.tmpl.yamlpropagates this value torndm.inc.yaml. @MAX@inrndm.inc.yamlis replaced with10.

Other Processing Steps

The remaining processors in the file handle histogram generation and saving data to a ROOT file:

- name: hist

type: art::TTreeProjectionProcessor

parameter:

FileName: hist/example/example.hist.yaml

OutputFilename: *histout

Type: art::TTreeProjection

Replace: |

MAX: @MAX@

- name: treeout

type: art::TOutputTreeProcessor

parameter:

FileName: *treeout

- Histogram generation is detailed in the next section.

- Output tree processing saves data to a ROOT file, utilizing aliases (

*treeout) defined in theAnchorsection.

General Structure of a Steering File

Steering files typically include the following processors:

- Timer: Measures processing time but does not affect data analysis.

- EventStore: Handles event information for loops.

- Mapping: Maps raw data to detectors.

- Processing steps: Performs specific data analysis tasks.

- Histogram: Generates histograms from processed data.

- Output: Saves processed data in ROOT file format.

flowchart LR

A("**EventStore**") --> B("**Mapping**<br>(Optional)")

B --> C("**Processing Steps**<br>(e.g., Calibration)")

C --> D("**Histogram**<br>(Optional)")

D --> E("**Output**")

E -.-> |Loop| A

Summary

- Steering files define the analysis flow using YAML syntax.

- Dynamic variables enclosed in

@are replaced with command-line arguments. - The

Processorsection specifies the sequence of processing tasks. - Use

includeto reference other steering files for modular configurations. - Typical components include timers, data stores, mappings, processing steps, histograms, and output.

Histogram Definition

In the previous section, we introduced the structure of the steering file, including a processor for drawing histograms. In online analysis, quickly displaying predefined histograms is essential. This section explains how histograms are defined and managed.

Steering File Block

To process histograms, the art::TTreeProjectionProcessor is used (unless a custom processor has been created for CRIB).

Below is an example from steering/example/example.tmpl.yaml:

Processor:

# skip

- name: hist

type: art::TTreeProjectionProcessor

parameter:

FileName: hist/example/example.hist.yaml

OutputFilename: *histout

Type: art::TTreeProjection

Replace: |

MAX: @MAX@

Key Parameters

- FileName: Points to the file with histogram definitions.

- OutputFilename: Specifies where the ROOT file containing the histogram objects will be saved. The YAML alias

*histoutis used here. - Type: Defines the processing class, which should be

art::TTreeProjectionfor histograms processed byart::TTreeProjectionProcessor. - Replace: Substitutes placeholders (e.g.,

@MAX@) in the histogram definition file with specified values.

Note: YAML's

|symbol ensures that line breaks are included as written. Though not critical in this case, it impacts multi-line text handling.

Histogram Definition File

The histogram definitions are stored in a separate file.

For instance, hist/example/example.hist.yaml contains:

group:

- name: test

title: test

contents:

- name: hRndm

title: random value

x: ["random.fValue",100,0.,@MAX@]

include:

- name: hist/example/example.inc.yaml

replace:

MAX: @MAX@

SUFFIX: 2

BRANCH: random

The file is divided into two main blocks: group and include.

group Block

The group block organizes histograms into logical units.

Each group corresponds to an art::TTreeProjGroup object, which is referenced in the artemis command section:

artemis [] ls

artemis

> 0 art::TTreeProjGroup test2 test (2)

1 art::TTreeProjGroup test test

2 art::TAnalysisInfo analysisInfo

The name and title keys in the group block define the art::TTreeProjGroup object:

group:

- name: test

title: test

Defining Histogram Contents

Histograms within a group are defined under the contents key. Multiple histograms can be defined as an array. For example:

# skip

contents:

- name: hRndm

title: random value

x: ["random.fValue",100,0.,@MAX@]

# you can add histograms here

#- name: hRndm2

Key Parameters

| Key | Description |

|---|---|

| name | The histogram's unique identifier. |

| title | Display title for the histogram. |

| x | Defines the x-axis. Format: [variable, bin count, min, max]. |

| y | Defines the y-axis (if specified, creates a 2D histogram). |

| cut | Filter condition for the histogram, often referred to as a "cut" or "gate". |

variable in Histogram Definitions

Histograms generated by art::TTreeProjectionProcessor are created based on tree objects, similar to the ROOT command:

root [] tree->Draw("variable>>(100, -10.0, 10.0)", "variable2 > 1.0")

In this case:

- x:

["variable", 100, -10.0, 10.0] - cut:

"variable2 > 1.0;"

In artemis, data is accessed through the member variables or methods of branch objects rather than directly referencing branch names.

include Block

Histogram definition files can reference other files using the include keyword:

include:

- name: hist/example/example.inc.yaml

replace:

MAX: @MAX@

SUFFIX: 2

BRANCH: random

- name: Specifies the path to the included file relative to the working directory.

- replace: Replaces placeholders in the included file with specified values.

Example of the referenced file hist/example/example.inc.yaml:

group:

- name: test@SUFFIX@

title: test (@SUFFIX@)

contents:

- name: hRndm@SUFFIX@

title: random number

x: ["@BRANCH@.fValue",100, 0., @MAX@]

The structure of the included file mirrors that of the main file. Conceptually, the included content is appended to the main file.

While the example code demonstrates referencing multiple files, overuse can lead to complexity. Reference files only when it simplifies management.

Summary

Histograms in artemis are defined through a combination of steering files and separate histogram definition files.

The art::TTreeProjectionProcessor processes these definitions, enabling efficient creation and display of histograms during analysis.

Key points:

- The steering file specifies the histogram processor and its parameters.

- Histogram definition files use

groupblocks to logically organize histograms and include key parameters likex,y, andcut. - External files can be included for reusability, but excessive inclusion should be avoided for clarity.

Preparation

Note: In CRIB analysis, processors introduced in this Chapter may be customized. they are discussed in the CRIB Chapter. Currently,

artemisitself was modified and rebuilt, but there is a possibility of developing equivalent processors within theart::cribnamespace in the future. If that occurs, this manual will need to be updated (okawak expected someone to do so).

Map and Seg Configuration

This section explains the necessary configurations for analyzing actual data. The structure of the data files assumes the RIDF (RIKEN Data File) format, commonly used in DAQ systems at RIKEN. Here, we focus on two essential configuration files for extracting and interpreting data: the map file and the segment file.

The Role of Configuration Files

In artemis, input data is stored in an object called the EventStore.

To process RIDF files, the class TRIDFEventStore is used.

Configuration files map the raw data in TRIDFEventStore to the corresponding DAQ modules and detectors, enabling accurate interpretation.

Segment Files

Segment files are used to read raw ADC or TDC data. When the map file is not properly configured, segment files help verify whether data exists in each channel.

Segment files are located in the conf/seg directory within your working directory.

The directory structure should look like this:

./

├── conf/

│ ├── map/

│ ├── seg/

│ │ ├── modulelist.yaml

│ │ ├── seglist.yaml

modulelist.yaml

The modulelist.yaml file defines the modules used in the analysis.

Each module corresponds to a DAQ hardware device, such as ADCs or TDCs.

Example: Configuration for a V1190A module

V1190A:

id: 24

ch: 128

values:

- tdcL: [300, -5000., 300000.] # Leading edge

- tdcT: [300, -5000., 300000.] # Trailing edge

- V1190A: Module name, used in

seglist.yaml. - id: Module ID, defined in

TModuleDecoder(V1190 example) - ch: Total number of channels. For example, the V1190A module has 128 channels.

- values: Histogram parameters in the format

[bin_num, x_min, x_max]. These are used when checking raw data (see Raw data check).

seglist.yaml

The seglist.yaml file describes the settings of each module.

In the CRIB DAQ system (babirl), modules are identified using four IDs: dev, fp, mod, and geo.

These IDs are assigned during DAQ setup and are written into the RIDF data file.

ID Descriptions

| ID | Description | CRIB example |

|---|---|---|

| dev | Device ID: Distinguishes major groups (e.g., experiments). | CRIB modules use 12, BIGRIPS and SHARAQ use others. |

| fp | Focal Plane ID: Differentiates sections of the DAQ system or crates. | Common DAQ is used for CRIB focal planes, so this ID is used to differentiate MPV crates. |

| mod | Module ID: Identifies the purpose of the module (e.g., PPAC, PLA). | 6 for ADCs, 7 for TDCs, 63 for scalers. |

| geo | Geometry ID: Distinguishes individual modules with the same [dev, fp, mod] configuration. | Unique ID for each module. |

Example: Configuration for a V1190A module in seglist.yaml

J1_V1190:

segid: [12, 2, 7]

type: V1190A

modules:

- id: 0

- id: 1

- J1_V1190: A descriptive name for the module, used when creating histograms or trees.

- segid: Represents [dev, fp, mod] values.

- type: Specifies the module type, as defined in

modulelist.yaml. - modules: Lists geometry IDs (geo). Multiple IDs can be specified as an array.

Map Files

Map files consist of a main configuration file (mapper.conf) and individual map files stored in the conf/map directory.

./

├── mapper.conf

├── conf/

│ ├── map/

│ │ ├── ppac/

│ │ │ ├── dlppac.map

│ │ ...

│ ├── seg/

mapper.conf

This file specifies which map files to load and their configuration. Each line in the file indicates the path to a map file relative to the working directory and the number of columns of the segid in the file. Example configuration:

# file path for configuration, relative to the working directory

# (path to the map file) (Number of columns)

#====================================

# bld

# cid = 1: rf

conf/map/rf/rf.map 1

# cid = 2: coin

conf/map/coin/coin.map 1

# cid = 3: f1ppac

conf/map/ppac/f1ppac.map 5

# cid = 4: dlppac

conf/map/ppac/dlppac.map 5

Format of the mapper.conf

- Path: Specifies the relative path to the map file.

- Number of Columns: Indicates the number of segments defined in the map file.

Individual Map Files

Each map file maps DAQ data segments to detector inputs. The general format is:

cid, id, [dev, fp, mod, geo, ch], [dev, fp, mod, geo, ch], ...

ID description

| ID | Description |

|---|---|

catid or cid | Category ID: Groups data for specific analysis. |

detid or id | Detector ID: Differentiates datasets within a category. It corresponds to the row of map files. |

[dev, fp, mod, geo, ch] | Represents segment IDs and channels (segid). |

typeid | Index of the segment IDs. The first set of segid is correspond to 0. |

Example for dlppac.map:

# map for dlppac

#

# Map: X1 X2 Y1 Y2 A

#

#--------------------------------------------------------------------

# F3PPACb

4, 0, 12, 2, 7, 0, 4, 12, 2, 7, 0, 5, 12, 2, 7, 0, 6, 12, 2, 7, 0, 7, 12, 2, 7, 0, 9

# F3PPACa

4, 1, 12, 0, 7, 16, 1, 12, 0, 7, 16, 2, 12, 0, 7, 16, 3, 12, 0, 7, 16, 4, 12, 0, 7, 16, 0

In this example:

catid = 4: Indicates the PPAC category.detid = 0, 1: Identifies the specific data set within this category.- Five

[dev, fp, mod, geo, ch]combinations: Define the five input channels (X1, X2, Y1, Y2, A). typeid = 0corresponds X1,typeid = 1corresponds X2, and so on.

Relation to mapper.conf

The number of [dev, fp, mod, geo, ch] combinations in a map file determines the column count in mapper.conf.

For example:

conf/map/ppac/dlppac.map 5

Verifying the Mapping (Optional)

To ensure correctness, use the Python script pyscripts/map_checker.py:

python pyscripts/map_checker.py

For uv environments:

uv run pyscripts/map_checker.py

Summary

- Segment files: Define hardware modules and verify raw data inputs (refer to Raw Data Check).

- Map files: Map DAQ data to detectors and analysis inputs. Verify with

map_checker.py.

Read RIDF Files

This section explains how to read RIDF files using artemis.

Currently, binary RIDF files are processed using two classes: art::TRIDFEventStore and art::TMappingProcessor.

Using art::TRIDFEventStore to Read Data

To load data from a RIDF file, use art::TRIDFEventStore.

Here is an example of a steering file:

Anchor:

- &input ridf/@NAME@@NUM@.ridf

- &output output/@NAME@/@NUM@/hoge@NAME@@NUM@.root

- &histout output/@NAME@/@NUM@/hoge@NAME@@NUM@.hist.root

Processor:

- name: timer

type: art::TTimerProcessor

- name: ridf

type: art::TRIDFEventStore

parameter:

OutputTransparency: 1

Verbose: 1

MaxEventNum: 100000

SHMID: 0

InputFiles:

- *input

- name: outputtree

type: art::TOutputTreeProcessor

parameter:

FileName:

- *output

The timer processor shows analysis time and is commonly included.

The key section to note is the ridf block.

Key Parameters

| Parameter | Defalut Value | Description |

|---|---|---|

| OutputTransparency | 0 | 0 saves output to a ROOT file, 1 keeps it for internal use only. (Inherited from art::TProcessor.) |

| Verbose | 1 | 0 for quiet mode, 1 for detailed logs. (Inherited from art::TProcessor.) |

| MaxEventNum | 0 | 0 for no limit; otherwise specifies the number of entries to process. |

| SHMID | 0 | Shared Memory ID for DAQ in online mode (babirl nssta mode). |

| InputFiles | Empty array | List of input RIDF file paths. Files are processed sequentially into a single ROOT file. |

Unspecified parameters use default values.

Parameters inherited from art::TProcessor are common to all processors.

Processing with art::TRIDFEventStore

The objects processed by this processor are difficult to handle directly.

It is common to set OutputTransparency to 1, meaning the objects will not be saved to a ROOT file.

To understand what is produced, you can set OutputTransparency to 0 to examine the output.

artlogin <username>

a

artemis [] add steering/hoge.yaml NAME=xxxx NUM=xxxx

artemis [] res

artemis [] sus

artemis [] fcd 0

artemis [] br

For detailed commands, refer to the Artemis Commands section.

Example output:

segdata art::TSegmentedData

eventheader art::TEventHeader

The eventheader is always output, while segdata is produced when OutputTransparency is set to 0.

Key data is contained in segdata.

Further details are covered in subsequent sections.

Using art::TMappingProcessor for Data Mapping

Raw RIDF files do not inherently indicate detector associations or processing rules. Use mapping files, as explained in the previous section, to map the data.

Example steering file:

Processor:

- name: timer

type: art::TTimerProcessor

- name: ridf

type: art::TRIDFEventStore

parameter:

OutputTransparency: 1

Verbose: 1

MaxEventNum: 100000

SHMID: 0

InputFiles:

- *input

- name: mapper

type: art::TMappingProcessor

parameter:

OutputTransparency: 1

MapConfig: mapper.conf

- name: outputtree

type: art::TOutputTreeProcessor

parameter:

FileName:

- *output

Key Parameters

| Parameter | Defalut Value | Description |

|---|---|---|

| MapConfig | mapper.conf | Path to the mapper configuration file. Defaults to mapper.conf in the working directory. |

This parameter allows custom mappings, such as focusing on specific data during standard analyses.

Use an alternative mapper.conf in another directory and specify its path when needed.

Processing with art::TMappingProcessor

The outputs of this processor are also hard to use directly, so OutputTransparency is typically set to 1.

To examine what is produced, set it to 0 and observe the output.

artlogin <username>

a

artemis [] add steering/hoge.yaml NAME=xxxx NUM=xxxx

artemis [] res

artemis [] sus

artemis [] fcd 0

artemis [] br

Example output:

segdata art::TSegmentedData

eventheader art::TEventHeader

catdata art::TCategorizedData

A new branch, catdata, is created.

It categorizes data from segdata and serves as the basis for detector-specific analyses.

Workflow Diagram

flowchart LR

A("**RIDF data files**") --> B("<u>**art::TRIDFEventStore**</u><br>input: RIDF files<br>output: segdata")

B --> C("<u>**art::TMappingProcessor**</u><br>input: segdata<br>output: catdata")

C --> D("**<u>Mapping Processor</u>**<br>input: catdata<br>output: hoge")

D --> E("**<u>Other Processors</u>**<br>input: hoge<br>output: fuga")

C --> F("**<u>Mapping Processor</u>**<br>input: catdata<br>output: foo")

F --> G("**<u>Other Processors</u>**<br>input: foo<br>output: bar")

Both segdata and catdata are typically set to OutputTransparency: 1 and processed internally.

Understanding these objects is essential for mastering subsequent analyses.

Time Reference for V1190

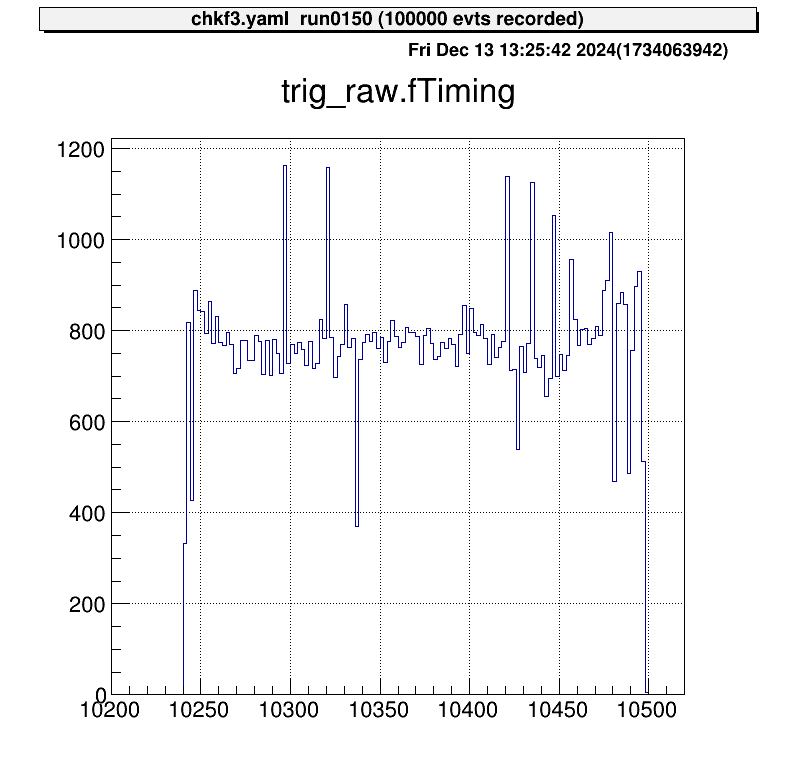

At CRIB, the CAEN V1190 module is used to acquire timing data in Trigger Matching Mode, where timing data within a specified window is recorded upon receiving a trigger signal.

The raw data from the module includes module-specific uncertainties. To ensure accurate timing, corrections are required. This section explains how to apply these corrections.

Raw Data

To understand the behavior of the V1190, consider the following setup:

flowchart LR

subgraph V1190

direction TB

B("**Module**")

C("Channels")

end

A("**Trigger Making**")-->|trigger|B

A-->|data|C

A-->|tref|C

- The trigger signal is input directly to the V1190, and timing data is recorded using two channels.

- The recorded data is referred to as

dataandtref.

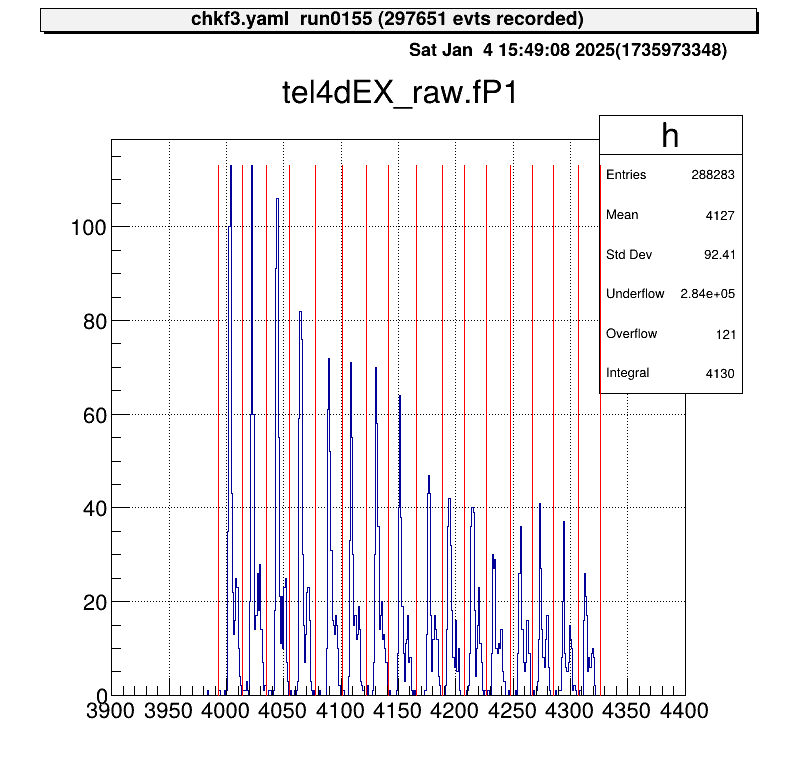

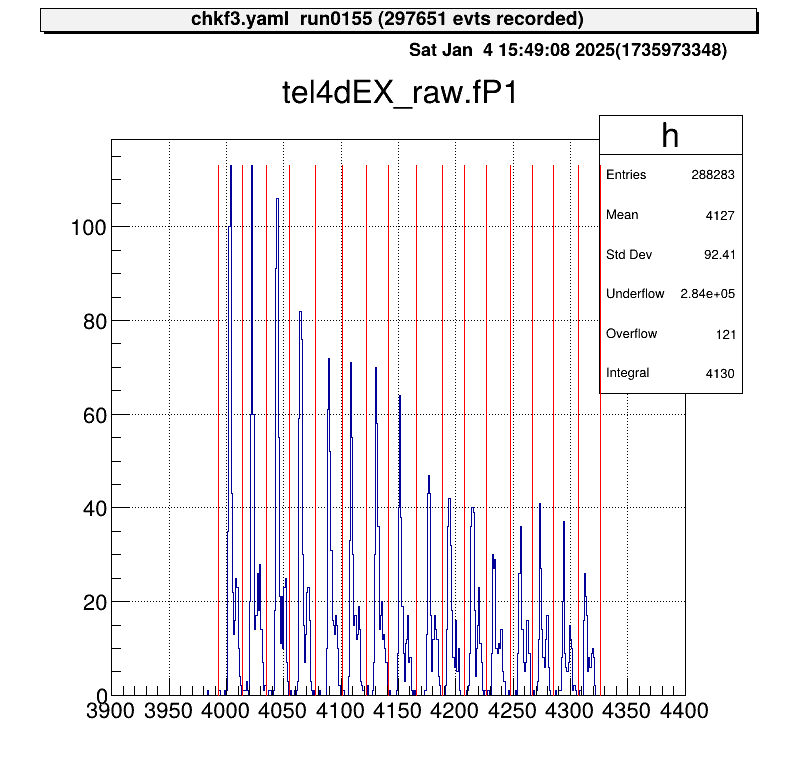

When data is examined without corrections, the result looks like this:

The horizontal axis represents the V1190 channel numbers. In this example, one channel corresponds to approximately 0.1 ns, leading to an uncertainty of about 25 ns. Ideally, since this signal is a trigger, data points should align at nearly the same channel.

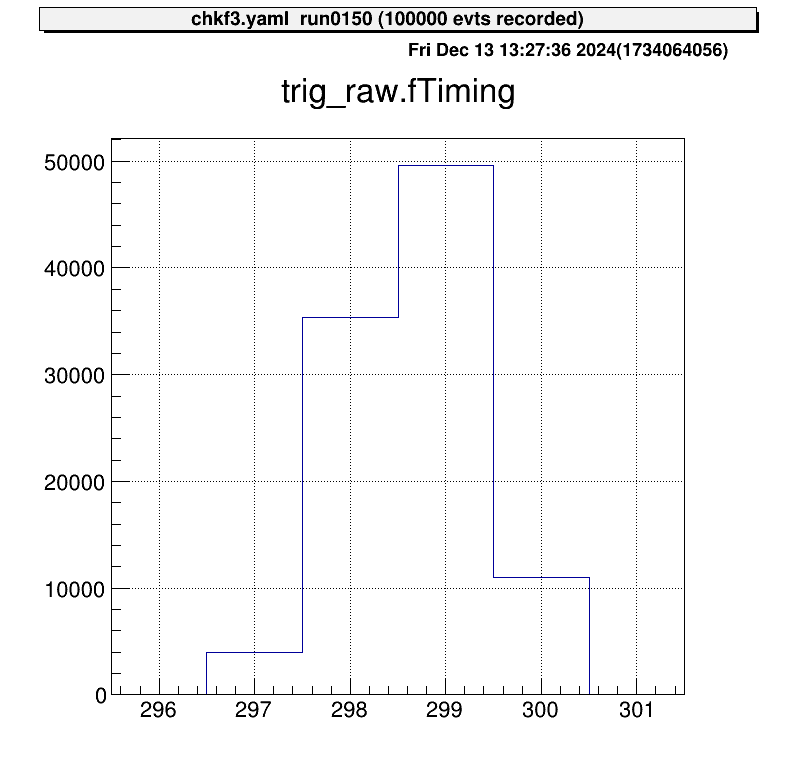

Correction Using "Tref"

This uncertainty is consistent across all data recorded by the V1190 for the same event, meaning it is fully correlated across channels.

By subtracting a reference timing value (called tref) from all channels, this module-specific uncertainty can be corrected.

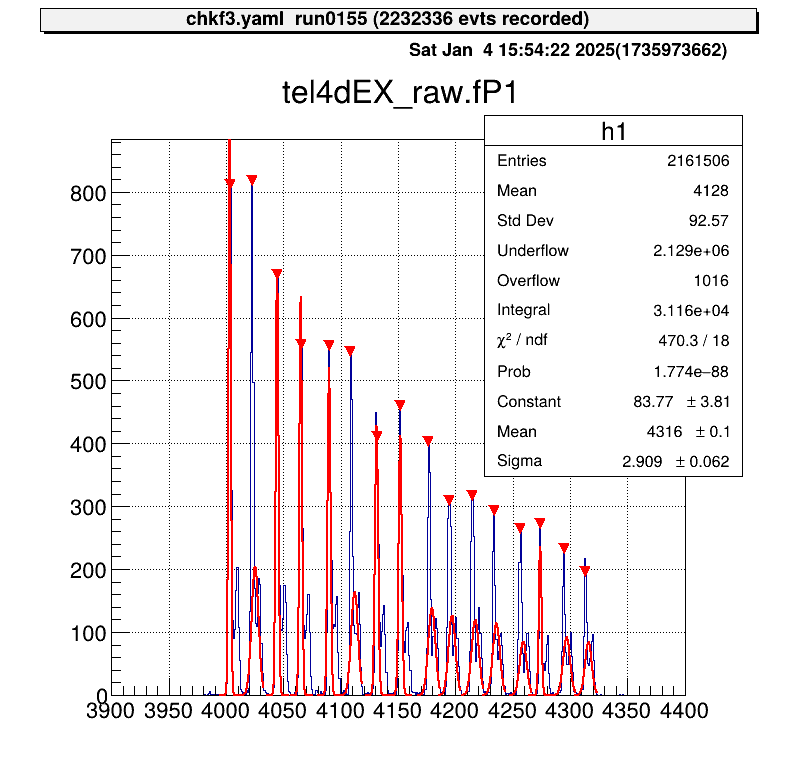

Below is an example of corrected data after subtracting tref:

The trigger signal now aligns at nearly the same channel. Without this correction, V1190-specific uncertainties degrade resolution, making this correction essential.

Any signal can be used as a

tref, but it must be recorded some signals for all events. For simplicity, the trigger signal is often used astref.

Applying the Correction in Steering Files

The correction is implemented using the steering/tref.yaml file, which is maintained separately for easy reuse.

An example configuration is shown below:

Processor:

# J1 V1190A

- name: proc_tref_v1190A_j1

type: art::TTimeReferenceProcessor

parameter:

# [[device] [focus] [detector] [geo] [ch]]

RefConfig: [12, 2, 7, 0, 0]

SegConfig: [12, 2, 7, 0]

Tref Processor Workflow

- Use the

art::TTimeReferenceProcessor. - Specify

RefConfig(tref channel) andSegConfig(target module). - The processor subtracts the channel specified in

RefConfigfrom all data in the module identified bySegConfig.

Refer to the ID scheme to correctly configure RefConfig and SegConfig for your DAQ setup.

Adding the Tref Processor to the Main Steering File

Include the tref processor in the main steering file using the include keyword.

Ensure the tref correction is applied before processing other signals. For example:

Anchor:

- &input ridf/@NAME@@NUM@.ridf

- &output output/@NAME@/@NUM@/hoge@NAME@@NUM@.root

- &histout output/@NAME@/@NUM@/hoge@NAME@@NUM@.hist.root

Processor:

- name: timer

type: art::TTimerProcessor

- name: ridf

type: art::TRIDFEventStore

parameter:

OutputTransparency: 1

InputFiles:

- *input

SHMID: 0

- name: mapper

type: art::TMappingProcessor

parameter:

OutputTransparency: 1

# Apply tref correction before other signal processing

- include: tref.yaml

# Process PPAC data with corrected timing

- include: ppac/dlppac.yaml

- name: outputtree

type: art::TOutputTreeProcessor

parameter:

FileName:

- *output

PPAC Calibration

MWDC Calibration

The current author (okawak) is not familiar with the MWDC, so please wait for further information from someone else.

Alpha-Source Calibration

MUX Calibration

Starting with the 2025 version, the structure of MUX parameter files and their loading method have been updated. Please note that these changes are not backward-compatible with earlier versions.

At CRIB, we use the MUX module by Mesytec. This multiplexer circuit is designed for strip-type Si detectors and consolidates five outputs:

- Two energy outputs (

E1,E2), - Two position outputs (

P1,P2) for identifying the corresponding strip, - One timing output (

T) from the discriminator.

The MUX can handle up to two simultaneous hits per trigger, outputting them as E1, E2, and P1, P2.

In single-hit events, E2 and P2 remain empty.

Currently, handling for E2 and P2 outputs is not implemented.

In practice, most Si detector events involve a single hit per trigger, and coincidence events have not posed a problem.

If you need to process E2 and P2, additional handling must be implemented.

This guide explains how to process data using the MUX in detail.

Map File

Since the five outputs are processed as a single set, the segid in the map file is written in five columns.

Update the mapper.conf as follows:

conf/map/ssd/tel_dEX.map 5

In the map file, list the segid values in the order [E1, E2, P1, P2, T]:

# Map: MUX [ene1, ene2, pos1, pos2, timing]

#

#--------------------------------------------------------------------

40, 0, 12 1 6 4 16, 12 1 6 4 17, 12 1 6 4 18, 12 1 6 4 19, 12 2 7 0 70

In this example, you can access these data sets using catid 40.

Checking Raw Data

To inspect raw data, use the art::crib::TMUXDataMappingProcessor:

Processor:

- name: MyTMUXDataMappingProcessor

type: art::crib::TMUXDataMappingProcessor

parameter:

CatID: -1 # [Int_t] Category ID

OutputCollection: mux # [TString] Name of the output branch

Key Parameters

CatID: Thecatidspecified in the map file.OutputCollection: The name of the output branch.

Accessing TMUXData Type

| Name | Variable | Getter |

|---|---|---|

| E1 | fE1 | GetE1() |

| E2 | fE2 | GetE2() |

| P1 | fP1 | GetP1() |

| P2 | fP2 | GetP2() |

| T | fTiming (first hit) | GetTrig() |

| T | fTVec[idx] (timing array) | GetT(idx) |

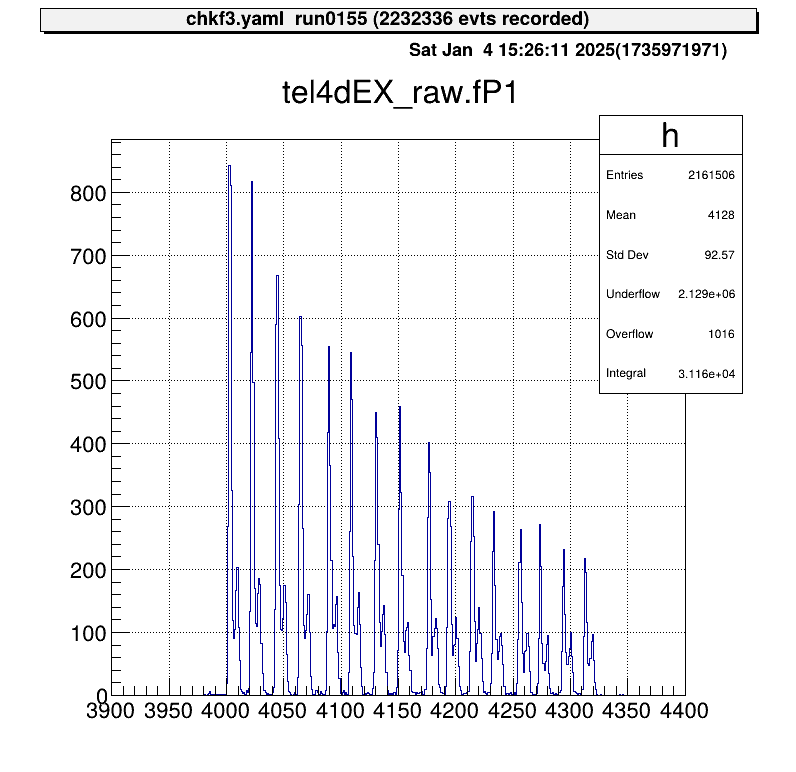

For example, to examine the P1 position signal:

artlogin <username>

a

artemis [] add steering/hoge.yaml NAME=xxxx NUM=xxxx

artemis [] res

artemis [] sus

artemis [] fcd 0

artemis [] zo

artemis [] tree->Draw("mux.fP1")

The position output appears as discrete signals, with each peak corresponding to a strip number.

If the map file includes multiple rows:

40, 0, 12 1 6 4 16, 12 1 6 4 17, 12 1 6 4 18, 12 1 6 4 19, 12 2 7 0 70

40, 1, 12 1 6 4 20, 12 1 6 4 21, 12 1 6 4 22, 12 1 6 4 23, 12 2 7 0 71

The output will be a two-element array.

To process this further, use art::TSeparateOutputProcessor to split the array into individual elements.

The YAML array index corresponds to the row in the map file:

Processor:

- name: MyTSeparateOutputProcessor

type: art::TSeparateOutputProcessor

parameter:

InputCollection: inputname # [TString] name of input collection

OutputCollections: # [StringVec_t] list of name of output collection

- mux1

- mux2

Calibration

What Is MUX Calibration?

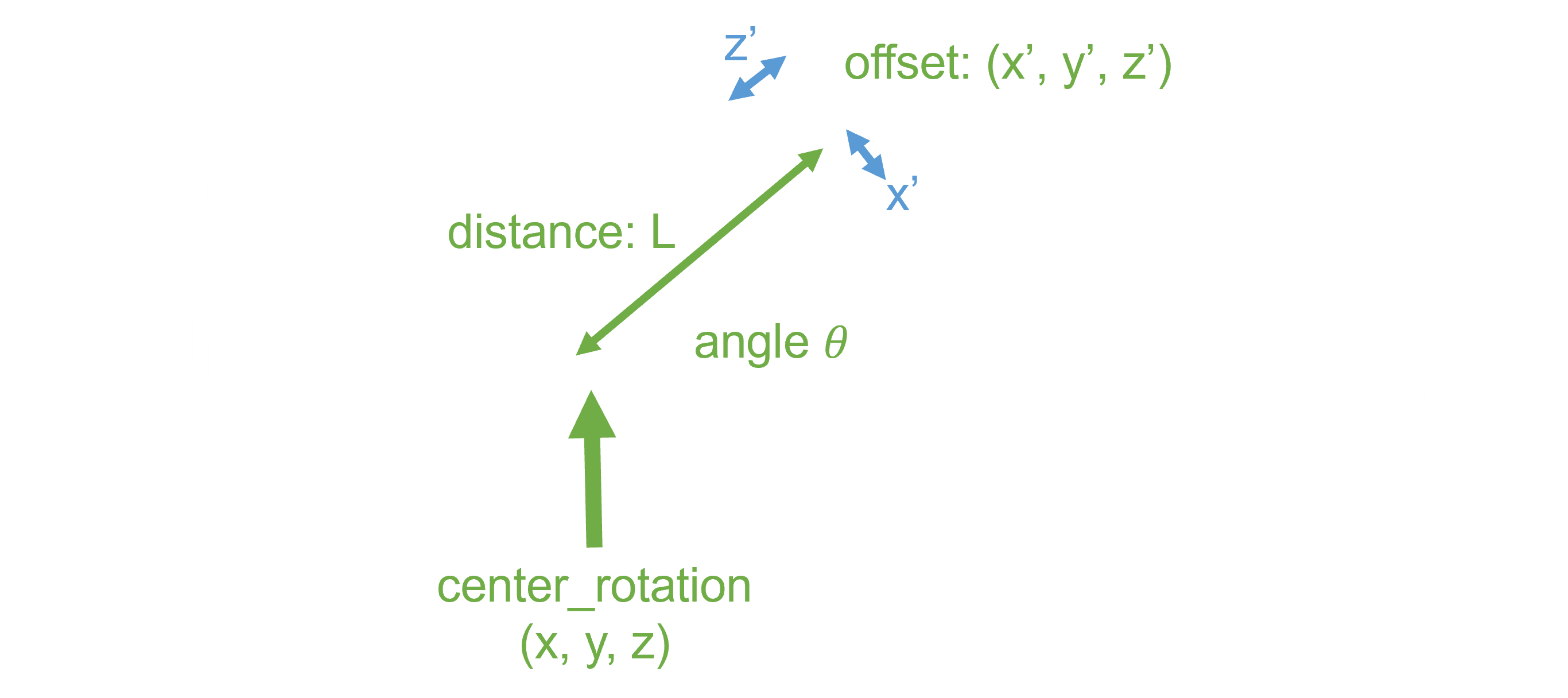

To calibrate a detector using a MUX circuit, the position output must be mapped to its corresponding strip, and each event assigned to the correct strip.

In the current method, as illustrated, an event falling into two adjacent regions (from the left) is assigned to the corresponding x-th strip.

The goal of MUX calibration is to determine the red boundary values in the figure and save them in a parameter file.

Calibration Macros

To streamline the MUX calibration process, two macros are provided:

macro/run_MUXParamMaker.C: Runs the calibration macro and logs its execution.macro/MUXParamMaker.C: Contains the core function for performing MUX calibration.

The main calibration function, defined in macro/MUXParamMaker.C, requires the following arguments:

h1: Histogram object.telname: Telescope name for specifying the output directory.sidename: Indicates whether it’s the X or Y direction strip ("dEX"or"dEY"), also used for directory naming.runname、runnum: Used to generate the output file name to distinguish between different measurements.peaknum: Number of expected peaks (currently assumes 16 strips).

In macro/run_MUXParamMaker.C, use the ProcessLine() function to define calibration commands:

void run_MUXParamMaker() {

const TString ARTEMIS_WORKDIR = gSystem->pwd();

const TString RUNNAME = "run";

const TString RUNNUM = "0155";

gROOT->ProcessLine("fcd 0");

gROOT->ProcessLine("zone");

gROOT->ProcessLine("tree->Draw(\"tel4dEX_raw.fP1>>h1(500,3900.,4400.)\")");

gROOT->ProcessLine(".x " + ARTEMIS_WORKDIR + "/macro/MUXParamMaker.C(h1, \"tel4\", \"dEX\", \"" + RUNNAME + "\", \"" + RUNNUM + "\", 16)");

}

This macro records the calibration conditions.

To use it:

artemis [] add steering/hoge.yaml NAME=xxxx NUM=xxxx

artemis [] res

artemis [] sus

artemis [] .x macro/run_MUXParamMaker.C

Gaussian fitting is performed on each peak, and the parameter file is saved automatically.

Applying Parameters

Parameter files are stored in directories like prm/tel[1,2,...]/pos_dE[X, Y]/.

To simplify access, predefined steering files use a symbolic link called current.

By changing this symbolic link, you can switch parameter files without modifying the steering file.

Use the setmuxprm.sh script to manage these symbolic links.

This script requires gum and realpath.

Run the script interactively to create a current link pointing to the desired parameter file:

./setmuxprm.sh

Verifying Parameters

To verify the parameters, use the macro/chkmuxpos.C macro.

- Generate a histogram of

P1in Artemis. - Overlay the boundary lines from the current parameter file.

artlogin <username>

a

artemis [] add steering/hoge.yaml NAME=xxxx NUM=xxxx

artemis [] res

artemis [] sus

artemis [] fcd 0

artemis [] tree->Draw("mux.fP1>>h1")

To draw the boundaries on the histogram h1:

artemis [] .x macro/chkmuxpos.C(h1, "telname", "sidename")

Arguments:

h1: Histogram object.telname: Telescope name, used for locating the parameter file.sidename: Specify"dEX"or"dEY", also used for locating the parameter file.

This visual representation helps confirm that each peak aligns with its designated region.

Energy Calibration

Energy calibration is performed on a strip-by-strip basis, so strip assignment must be completed beforehand.

Parameter Objects

To load the necessary parameters into Artemis and use them in a processor, employ the art::TParameterArrayLoader:

Processor:

- name: proc_@NAME@_dEX_position

type: art::TParameterArrayLoader

parameter:

Name: prm_@NAME@_dEX_position

Type: art::crib::TMUXPositionConverter

FileName: prm/@NAME@/pos_dEX/current

OutputTransparency: 1

Processor Parameters:

Name: Specifies the name of the parameter object.Type: Specifies the class type of the parameter.FileName: Path to the parameter file.OutputTransparency: Set to 1 since parameter objects do not need to be saved in ROOT files.

Strip Assignment with TMUXCalibrationProcessor

Strip assignment is handled using the art::crib::TMUXCalibrationProcessor:

Processor:

- name: MyTMUXDataMappingProcessor

type: art::crib::TMUXDataMappingProcessor

parameter:

CatID: -1 # [Int_t] Category ID

OutputCollection: mux_raw # [TString] Name of the output branch

- name: MyTMUXCalibrationProcessor

type: art::crib::TMUXCalibrationProcessor

parameter:

InputCollection: mux_raw # [TString] Array of TMUXData objects

OutputCollection: mux_cal # [TString] Output array of TTimingChargeData objects

ChargeConverterArray: no_conversion # [TString] Energy parameter object of TAffineConverter

TimingConverterArray: no_conversion # [TString] Timing parameter object of TAffineConverter

PositionConverterArray: prm_@NAME@_dEX_position # [TString] Position parameter object of TMUXPositionConverter

HasReflection: 0 # [Bool_t] Reverse strip order (0--7) if true

Note: In CRIB, the Y-direction strip numbering for silicon detectors differs between geometric and output pin order. Set

HasReflectiontoTrueto reverse the strip order and align it with the natural geometric sequence.

The PositionConverterArray parameter is mandatory for strip assignment.

Energy and timing converters are optional; if left unspecified, the raw values are returned.

Performing Energy Calibration

The objects output by art::crib::TMUXCalibrationProcessor (mux_cal) are of type art::TTimingChargeData.

The fID field corresponds to the detid (i.e., the strip number).

Therefore, energy calibration can be conducted in a manner similar to the Alpha Calibration section.

Important: Perform energy calibration using the output from the calibration processor, not the mapping processor.

Summary

- MUX Calibration: Aligns the position output with the corresponding strips by determining and storing boundary values in parameter files.

- Parameter Loading: Use

art::TParameterArrayLoaderto load parameters for strip assignment into Artemis. - Strip Assignment: Employ

art::crib::TMUXCalibrationProcessorto complete strip assignment before performing energy calibration. - Calibration Workflow:

PositionConverterArrayis required for strip assignment.- Energy and timing converters are optional; raw values are used if not specified.

- Energy Calibration: Conducted on the processor output to ensure proper alignment of detector strips.

Geometry Setting

Git Operations (option)

CRIB Own Configuration

Analysis Environments

Online-mode Analysis

User Config

New Commands

Minor Change

Online Analysis

F1 Analysis

F2 Analysis

PPAC Analysis

MWDC Analysis

The current author (okawak) is not familiar with the MWDC, so please wait for further information from someone else.

Telescopes Analysis

F3 Analysis

Raw Data Check

Gate Processor

Shifter Tasks

Scaler Monitor

TimeStamp Treatment

Creating New Processors

The source code in artemis is categorized into several types.

The names below are unofficial and were introduced by okawak:

- Main: The primary file for creating the executable binary for artemis.

- Loop: Manages the event loop.

- Hist: Handles histograms.

- Command: Defines commands available in the artemis console.

- Decoder: Manages decoders and their configurations.

- EventStore: Handles events used in the event loop.

- EventCollection: Manages data and parameter objects.

- Processor: Processes data.

- Data: Defines data structures.

- Parameter: Manages parameter objects.

- Others: Miscellaneous files, such as those for artemis canvas or other utilities for analysis.

While users are free to customize these components, this chapter focuses on the Processor and Data categories, as these are often essential for specific analyses.

This chapter provides a step-by-step demonstration of creating a new processor and explains art::TRIDFEventStore and art::TMappingProcessor in detail, a crucial component for building processors.

Contents

-

A brief introduction to

EventStore. This section does not cover how to create or use a newEventStore. -

An overview of key concepts shared by all processors.

-

Explains

segdataproduced byart::TRIDFEventStoreand demonstrates how to use it to build a new processor. -

Describes Mapping Processors, which map data based on

catdataoutput fromart::TMappingProcessor. This section also provides a detailed explanation ofcatdata. -

Explains how to define new data classes. In artemis, specific classes are typically stored as elements in ROOT's

TClonesArray. This section details how to define data structures for this purpose. -

Explains how to define parameters for data analysis, either as member variables within a processor or as standalone objects usable across multiple processors.

EventStore

For previous CRIB analyses, the following three types of EventStore provided by artemis have been sufficient: